TL;DR Most tools that claim to predict post performance tell you what’s in a post — they can’t tell you why it would resonate. The Prediction Optimization Agent closes that gap by converting the image, caption, and influencer metadata into a single natural-language description that captures tone, credibility, and visual cohesion the way a strategist would. It then forecasts engagement, measures its errors, and autonomously rewrites its own instructions to produce better descriptions next time — with no human prompt engineering required. The result is a system that doesn’t just predict what will perform, but allows creative teams to draw insights on what makes a post tick — and what doesn’t.

Introduction

Influencer marketing is a multi-billion industry, yet many spending decisions still rely heavily on intuition. Brands pick creators by follower count, approve content by gut feel, and learn what worked only after the budget is gone. The cost of a wrong bet is not just wasted spend. It is missed revenue, lost momentum, and a creative team back at square one.

Everyone in the industry wants the same thing: a way to know, before publishing, whether a post will perform. But the tools built to answer that question often disappoint. They extract surface features like hashtag counts, color palettes, and face detection, then output a score no one can interpret or trust. They tell you what is in a post but nothing about why it would resonate.

The gap is not technical. It is perceptual. What makes a post work is something a skilled marketer can often articulate after the fact: the influencer’s tone felt effortless, the product placement didn’t interrupt the narrative, the caption hit a cultural nerve. These judgments require understanding context, intent, and audience, not just pixels and metadata.

This is the problem the Prediction Optimization Agent was designed to close. Rather than extracting features and producing an opaque score, the agent reads a post the way a strategist would. It examines the image, the caption, and the influencer’s history, then writes a structured natural-language description of everything that contributed to the post’s performance. A creative director can then read this description, challenge it, and act on it without ever needing to trust a black box.

The intuition is simple: the description that best predicts performance is, by definition, the description that best explains it. The agent iteratively refines this description by diagnosing its own errors, identifying what the previous descriptions failed to capture, and rewriting its own instructions to self-improve. Over successive iterations it converges on the specific qualities that actually drive engagement, not because anyone told it what to look for, but because it is guided by the minimization of its own predictive error.

The Anatomy of a Viral Post

Predicting the performance of an ad or a social media post before publishing — and certainly before spending money to boost it — Predicting the performance of an ad or a social media post before publishing — and certainly before spending money to boost it — remains a primary objective for marketers and influencers alike. But how do you distill something as complex and subjective as a social media post into a single prediction?

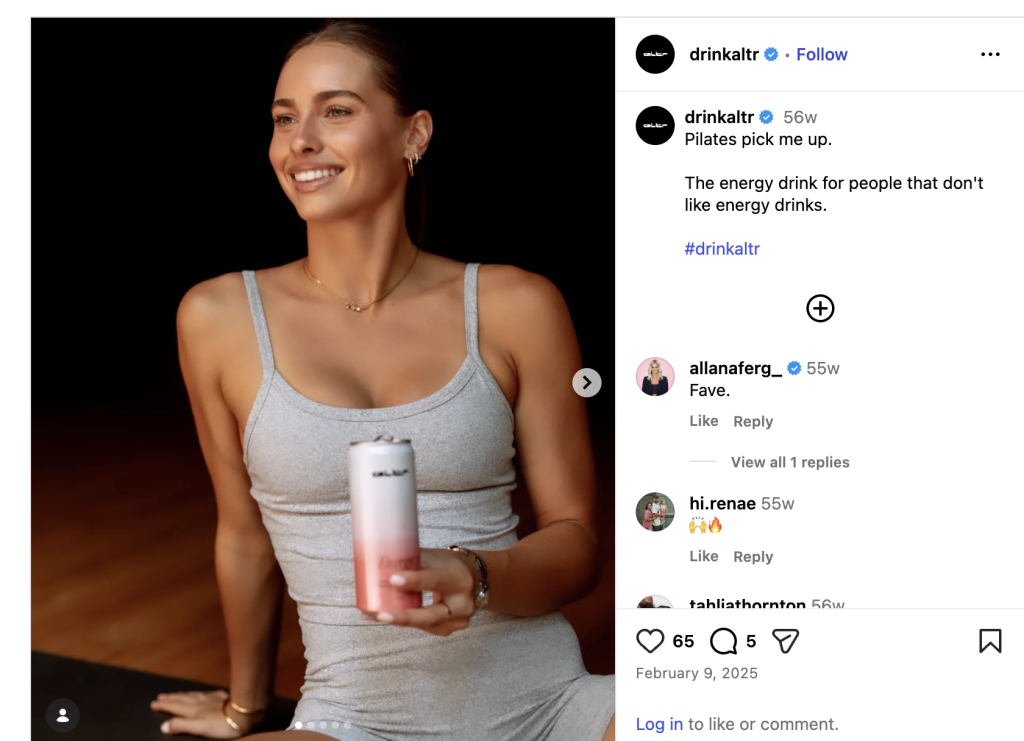

Consider a typical Instagram post. It is never just a picture. It’s a complex combination of different data types working together simultaneously. Take the influencer post shown in Figure 1. To truly understand why this post succeeds or fails, you need to consider:

- The image itself — composition, lighting, color palette, subjects, products, and setting.

- The caption — where the influencer might share a discount code, crack a joke, or strike an emotional chord.

- The influencer’s identity — their bio, follower count, niche credibility, and historical performance.

- The metadata — the time of day, geographic location, hashtags, and platform-specific context.

Each of these dimensions carries signal. None of them tells the full story alone. The magic — and the difficulty — lies in how they interact.

Figure 1: A typical influencer post. Traditional analytics struggle to measure the combined impact of the visual aesthetic, the caption’s tone, and the underlying metadata. To accurately predict engagement for a post like this, our system analyzes the image, caption and influencer statistics together as a single cohesive unit.

Why Traditional Approaches Fall Short

Historically, the industry has tried to solve this problem by breaking a post into its component parts and analyzing each one in isolation. Engineering teams would string together separate, disjointed machine-learning models — each responsible for a single modality — and attempt to fuse their outputs at the end:

- Standard Computer Vision Models: Isolated image-recognition algorithms scan the visual to detect objects, people, or products. Separate models handle face detection and emotion recognition. The output is a list of labels: “person detected,” “beverage detected,” “outdoor setting.”

- Text Analyzers & OCR: NLP tools parse the caption — counting hashtags, flagging emojis, scoring sentiment. Meanwhile, optical character recognition (OCR) software reads any text visible within the image itself.

- Tabular Metadata Algorithms: A statistical algorithm ingests structured fields — follower count, posting time, engagement history — and produces its own independent prediction.

Engineers then attempt to fuse these isolated outputs into a single forecast. But this approach has a fundamental, structural flaw: it cannot capture meaning that emerges from the interaction between modalities.

Consider a concrete example. Imagine a fitness influencer posts a photo of herself laughing mid-sip from an energy drink, with the caption: “My face when someone says they don’t need pre-workout 😂.”

A traditional computer vision model would tag this as: “person detected,” “beverage detected,” “outdoor setting,” “positive facial expression.” A separate text analyzer would count the hashtags and flag the emoji. But neither system — nor any crude fusion of their outputs — would understand the joke: that the humor comes from the caption reframing the laugh as a reaction, turning a standard product shot into a relatable meme.

It would also completely miss that this influencer is a certified nutritionist — meaning her credentials paired with an energy drink carry implicit credibility that a fashion influencer holding the same product would not.

Our Approach: Unifying Multimodal Data Through Semantic Translation

To address this limitation, we developed the Prediction Optimization Agent, a self-improving AI agent that moves beyond traditional approaches by unifying all available data into a single format it can reason about: natural language.

The agent’s core mechanism is deceptively simple but profoundly effective. It takes complex, disparate multimodal data — numerical metrics, images, video, and text captions — and converts everything into a single natural-language paragraph that holistically describes the post’s content, aesthetic, tone, and context. By projecting all of these distinct formats into readable text, heterogeneous data is normalized into a structure that a language model can process as a unified whole.

Instead of treating image and text as separate inputs, the agent uses a single prompt to digest all available information at once. Multimodal LLMs serve as one of the agent’s tools, acting as universal feature extractors that capture the abstract, human-centric concepts that traditional pipelines structurally cannot.

But the agent does not simply produce any description and hope it is useful. It is driven by a feedback loop grounded in predictive error: the descriptions it generates are used to forecast engagement, those forecasts are compared against real outcomes, and the resulting errors tell the agent exactly how much predictive value its current descriptions are capturing — and how much they are missing. Through successive rounds of this loop, the agent autonomously rewrites the instructions that govern how descriptions are composed, converging on the paragraph structure that maximizes predictive accuracy.

This error-driven process has a profound consequence for explainability. The description the agent converges on is not a generic summary — it is the description that the agent has discovered, through empirical optimization, to be the most predictive of real engagement outcomes. In other words, the features highlighted in the final description are there because they matter — because including them reduced prediction error. When the optimized description of a high-performing post calls out “candid humor,” “golden-hour lighting,” and “influencer credibility,” those aren’t arbitrary observations. They are the factors the agent learned to pay attention to because they measurably improved its ability to predict what performs well.

How the Prediction Optimization Agent Works

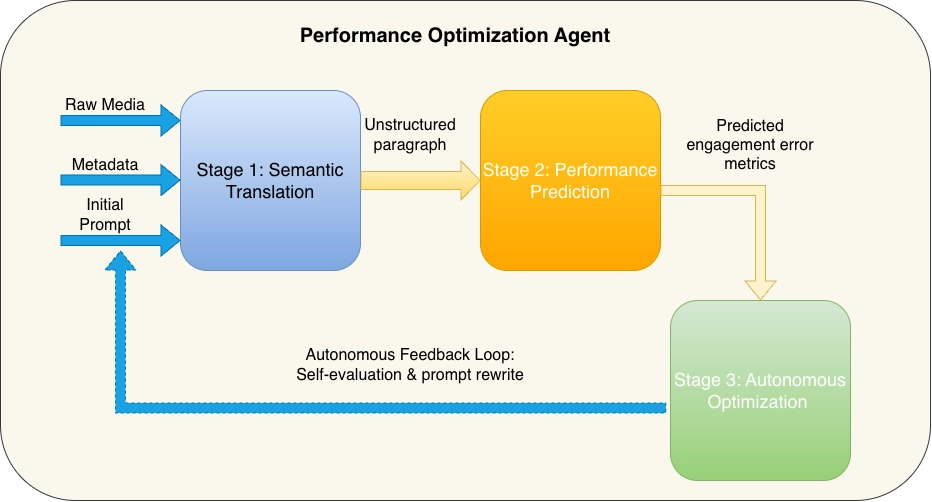

The Prediction Optimization Agent orchestrates three internal stages in a continuous feedback loop: it observes a post, describes it, predicts its performance, measures how far off it was, and then rewrites its own instructions to produce better descriptions next time — closing the loop and getting measurably better with every iteration, without any human intervention.

Figure 2: The Prediction Optimization Agent architecture. Raw media, metadata, and an initial prompt are fed into Stage 1 (Semantic Translation), which produces a natural-language description of the post. Stage 2 (Engagement Predictor) reads that description and predicts engagement. Prediction errors are then passed to Stage 3 (Self-Optimizer), which autonomously analyzes what went wrong and rewrites the Stage 1 prompt — closing the feedback loop and improving the system’s accuracy with every iteration.

Stage 1: Semantic Translation

The agent begins by ingesting the raw post — the image or video file, the caption text, and all available metadata (follower count, posting time, influencer bio, etc.). Using a multimodal LLM as its translation tool, and guided by a detailed set of internal instructions (its prompt), it produces a single, rich natural-language paragraph that captures not just what is in the post, but what the post means: the visual mood, the emotional tone, the relationship between caption and image, and the brand alignment.

The quality and focus of this description is entirely governed by the prompt — and as we will see, it is the prompt that the agent learns to optimize.

Stage 2: The Engagement Predictor

The agent passes the semantic paragraph to its prediction tool — a model that evaluates the post’s potential performance based entirely on the natural-language description from Stage 1.

The predictor can be any machine learning model with the ability to understand text paragraphs. It can be based on trees, deep learning, or any other compatible architecture. It can even be a fine-tuned LLM, upskilled for predictions in a specific domain. Our Agent is compatible with all these options.

In all cases, the quality of the prediction is only as good as the quality of the semantic description. A richer, more targeted paragraph gives the predictor more signal to work with — which is precisely why improving the description is the single most important action the agent can take.

Stage 3: The Self-Optimizer

This is the core of the Prediction Optimization Agent’s intelligence — and what makes it a true agent rather than a static model.

After predictions are generated for a batch of posts and compared against actual engagement outcomes, the agent enters a self-evaluation cycle. The agent is presented with:

- The prompt it used to generate descriptions in the current round.

- The per-post prediction errors — for each post, the predicted engagement versus the actual engagement, and the magnitude and direction of the miss.

- The aggregate error metrics — overall accuracy statistics such as mean absolute error, broken down by content category, audience size, or post type, revealing systematic blind spots.

- The complete history of prior prompts and their corresponding error metrics — so the agent can see not just how it performed this round, but how each previous prompt version performed, which changes helped, and which didn’t.

Armed with this structured feedback, the agent doesn’t guess at improvements — it reasons about them. It asks: Which types of posts am I consistently getting wrong? What do those posts have in common? What dimensions of the content is my current prompt failing to capture?

Based on this analysis, the agent autonomously rewrites the prompt instructions used in Stage 1. The rewritten prompt is not a minor tweak — it can add entirely new analytical dimensions, remove instructions that proved unhelpful, or restructure the description format to foreground the features most correlated with engagement.

The critical insight is that the predictive error is the signal that drives the entire system. Every improvement the agent makes to its prompt is motivated by a concrete, quantifiable gap between what it predicted and what actually happened. The agent isn’t optimizing descriptions to be “better” in some abstract sense — it is optimizing them to be more predictive, and the error metrics tell it exactly where it is falling short.

The Agent in Action: Watching the Prompt Evolve

To make this concrete, let’s follow the agent through three optimization rounds on a single post — the Hype Energy ad featuring two young models in blue athletic wear, photographed from a dramatic low angle against a vivid blue sky lined with palm trees.

Figure 3: Example Instagram post used for semantic translation.

Round 1: The Baseline

The agent starts with a deliberately simple, generic prompt:

Prompt (Round 1): “Analyze this Instagram post and the provided metadata. Describe the visual elements of the image, the style, and the mood. Then, explain how the image relates to the brand or influencer described in the metadata.”

The agent’s description:

“This Instagram post is a high-energy lifestyle advertisement for Hype Energy, utilizing a vibrant, ‘streetwear-meets-summer’ aesthetic to target a young, active demographic. The image uses a low-angle shot, which makes the subjects appear dominant and ‘larger than life.’ Two young models are featured in athletic/leisure wear. The female subject in the foreground holds the product directly toward the lens. The male subject in the background wears reflective ‘matrix-style’ sunglasses and a blue Jordan hoodie. The post aligns perfectly with the brand’s identity as described in the metadata. The visual style is polished and fashionable, positioning the drink as a lifestyle accessory rather than just a functional caffeine source. The caption emphasizes ‘Zero Sugar’ and staying ‘sharp’ and ‘unstoppable.’”

This is already more nuanced than what a traditional CV model would produce — but the predictor, working from this description, significantly underestimates the post’s engagement. The error gets logged. Across the full batch, the agent notices a pattern: it is systematically underperforming on highly produced brand-partnership posts.

Round 2: The Agent Adapts

The self-optimizer reviews the Round 1 errors and identifies the gap: the prompt never asked the agent to evaluate visual cohesion between talent styling and brand identity, or the psychological impact of compositional techniques like camera angle. It rewrites the prompt:

Prompt (Round 2): “Analyze this Instagram post and the provided metadata. Describe the visual elements, style, mood, and composition. Assess the visual cohesion between the talent’s styling (wardrobe, accessories, posture) and the brand’s visual identity. Evaluate how compositional choices — such as camera angle, framing, and subject placement — contribute to the aspirational or emotional tone of the post. Explain how the image, caption, and influencer profile work together to create a unified message.”

The agent’s description now reads:

“This Instagram post is a high-energy lifestyle advertisement for Hype Energy that demonstrates strong visual-brand cohesion. The coordinated blue wardrobe across both models — the female subject’s athletic top and the male subject’s Jordan hoodie — creates a seamless extension of the brand’s blue-and-white visual identity, embedding the product within the scene rather than presenting it as a foreign element. The dramatic low-angle shot elevates the subjects into an aspirational, dominant position, making the viewer look up at them — a compositional choice that reinforces the caption’s message of being ‘unstoppable.’ The female subject holds the product at the camera’s focal point with deliberate confidence, while the male subject’s reflective sunglasses and relaxed posture add an effortless, streetwear credibility. Together, the image, caption, and influencer positioning frame the energy drink not as a beverage but as a lifestyle symbol for a young, fashion-conscious audience.”

The prediction for this post improves noticeably. Across the batch, the error on brand-partnership posts drops. But the agent notices it is still missing on posts where influencer credibility plays a key role — posts where who the influencer is matters as much as what’s in the frame.

Round 3: The Agent Sharpens Further

The self-optimizer reviews Rounds 1 and 2 side by side — seeing that the Round 2 prompt improved brand-cohesion analysis but still lacks attention to influencer authority and audience trust signals. It rewrites again:

Prompt (Round 3): “Analyze this Instagram post and the provided metadata. Describe the visual elements, style, mood, and composition. Assess the visual cohesion between the talent’s styling and the brand’s visual identity. Evaluate how compositional choices contribute to the aspirational or emotional tone. Analyze the influencer’s credibility and authority relative to the product category — consider their professional background, niche expertise, audience demographics, and how these factors amplify or undermine the authenticity of the endorsement. Assess the emotional resonance of the caption: does it use humor, aspiration, relatability, or urgency, and how does this tone interact with the visual content? Explain how all elements — image, caption, influencer identity, and audience context — work together to create a unified, persuasive message.”

The agent’s description:

“This Instagram post is a high-energy lifestyle advertisement for Hype Energy that achieves strong visual-brand cohesion and leverages influencer-audience alignment to maximize credibility. The coordinated blue wardrobe across both models creates a seamless extension of the brand’s visual identity. The dramatic low-angle shot places the subjects in an aspirational, dominant position, reinforcing the caption’s message of being ‘unstoppable.’ The female subject’s direct-to-camera product hold at the image’s focal point conveys deliberate confidence, while the male subject’s reflective sunglasses and relaxed posture add streetwear authenticity. Critically, the influencer’s established presence in the fitness and lifestyle niche — with a predominantly young, health-conscious following — makes the ‘Zero Sugar’ energy drink endorsement feel credible rather than transactional. The caption’s tone (‘Stay sharp. Stay unstoppable.’) uses aspirational brevity that mirrors the visual intensity, creating a tight loop between what the viewer sees and what they read. The overall effect positions the product as an identity marker for an active, fashion-forward audience, rather than a commodity — a framing that this influencer’s audience is primed to respond to.”

This description now captures visual strategy, brand cohesion, compositional psychology, influencer credibility, audience alignment, and caption-image interaction — all in a single readable paragraph. The prediction error drops significantly. And a marketing team reading this description can immediately see why the post works: the credibility is authentic, the visual identity is seamless, and the caption reinforces the image rather than fighting it.

The agent arrived at this level of descriptive richness not because someone hand-engineered the prompt, but because the predictive errors from Rounds 1 and 2 told it exactly what it was missing.

Real-World Application: Lessons and Implications

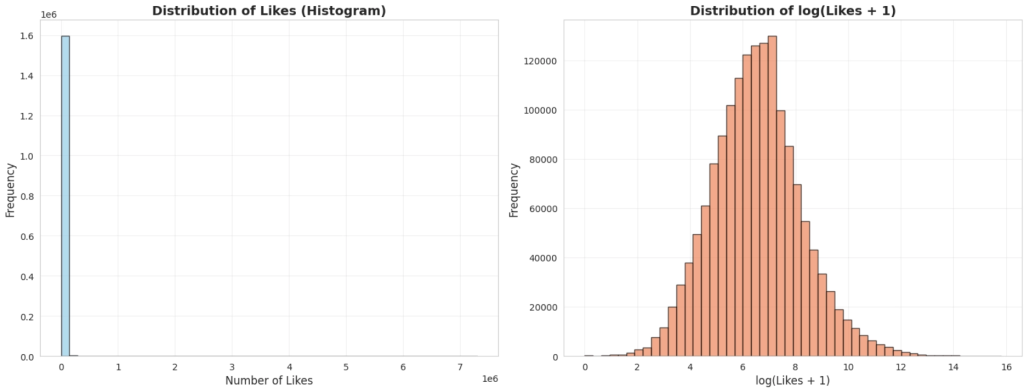

To validate the Prediction Optimization Agent in a real-world scenario, it was tested using an Instagram Influencer Dataset containing approximately 10.18 million posts spanning a diverse range of content categories and audience sizes. The results revealed key insights about both the agent’s learning dynamics and the practical implications for marketing teams.

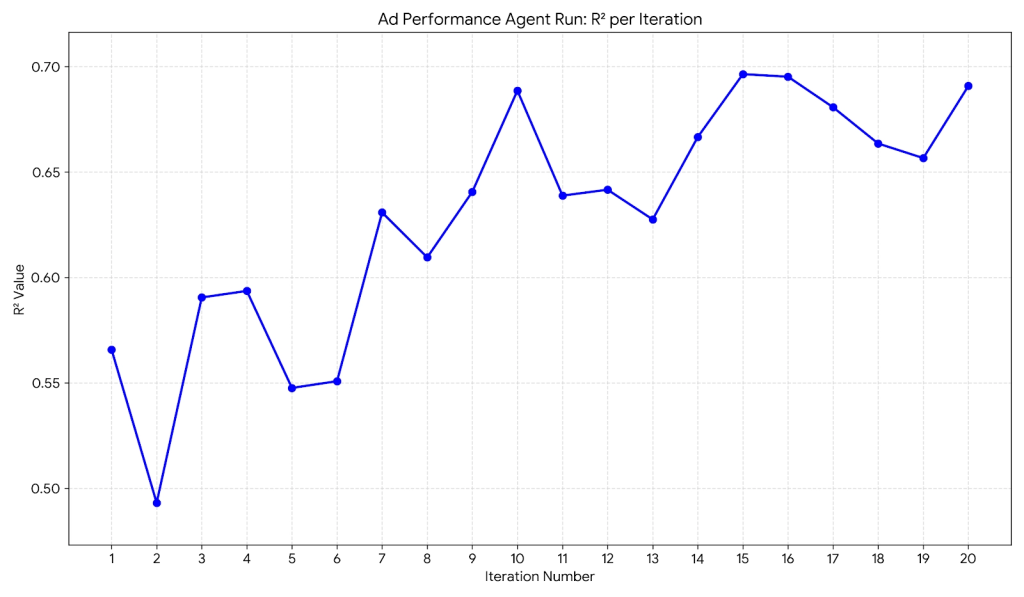

The Agent Learns What Matters — Autonomously

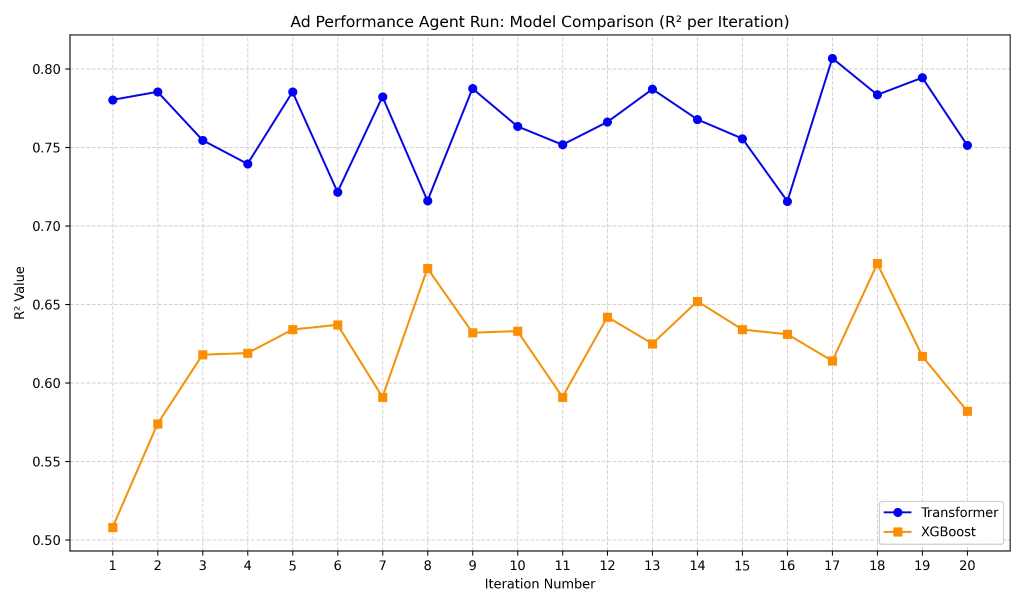

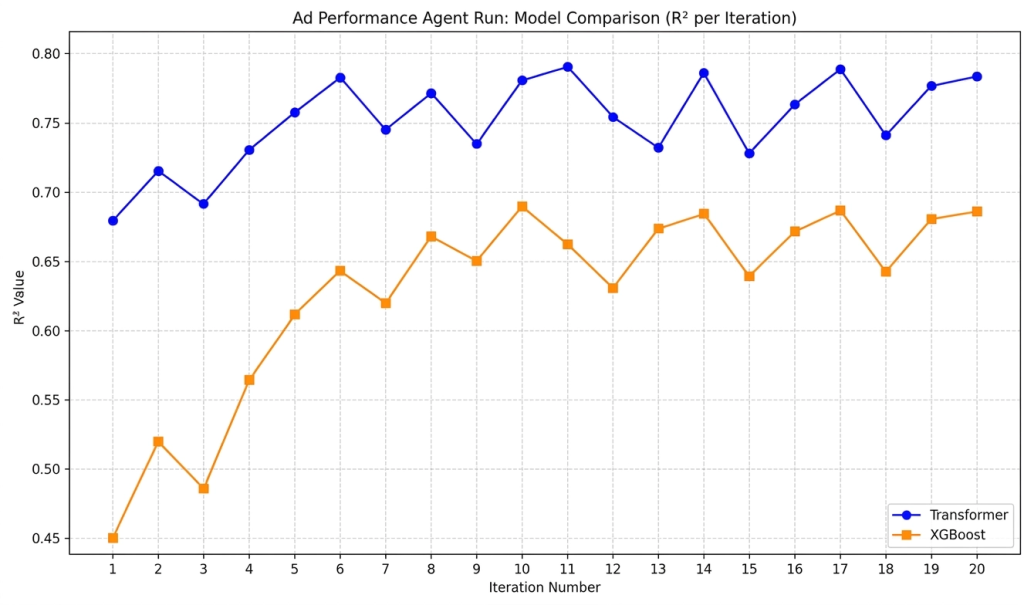

By processing its own historical error rates, the Prediction Optimization Agent autonomously learned to rewrite its prompts — producing richer, more targeted post descriptions with every iteration, which in turn drove increasingly accurate predictions.

Figure 4 — Autonomous Learning: The chart tracks the agent’s predictive performance (y-axis) across successive optimization rounds (x-axis). Each point represents a full cycle of the agent’s loop: describe → predict → evaluate → rewrite. The trend demonstrates that as the agent iteratively refined its own prompt — guided by quantitative error metrics from prior rounds — forecast accuracy improved consistently and autonomously, without any human prompt engineering.

The agent’s optimization works by feeding it the complete history of prior prompts alongside rigorous, quantitative error breakdowns from every previous round. Armed with this granular self-knowledge, the agent identifies precisely which content dimensions it has been under-analyzing — production quality, humor style, credibility signals, visual-brand cohesion — and surgically updates its prompt to capture those features in subsequent iterations. Each round’s prompt is built on the empirical lessons of every round before it, producing a compounding improvement curve where the descriptions become progressively more predictive and, as a direct consequence, more explanatory.

Conclusions

The Prediction Optimization Agent demonstrates something that extends well beyond social media: natural-language prompts can be treated as tunable parameters, optimized autonomously by the AI itself. By allowing the agent to refine its own instructions through predictive error, the system progressively discovers what drives human engagement and expresses that knowledge in plain language.

For marketing teams, this is a significant departure from opaque AI tools that output a score and leave everyone guessing. When a team wants to understand why one campaign outperforms another, they don’t need to interpret a dashboard of abstract model coefficients. They can compare the text profiles of a high-performing post and a low-performing one, side by side, and immediately see the differences the AI picked up on: one might highlight “authentic, candid composition with humor-driven caption and strong influencer-niche alignment,” while the other notes “generic studio shot with formulaic promotional language and weak audience-brand fit.” The patterns reveal themselves in plain English, and they are the right patterns, because the agent discovered them by optimizing for predictive accuracy.

In practice, this means teams can run draft campaign concepts through the system before committing production and media budgets, getting a readable assessment of how the AI interprets the creative. Designers and copywriters can test variations of a post and compare descriptions side by side to see, in their own language, which direction resonates more strongly. And by normalizing visual and written media into a unified, readable format, brands can pair creative intuition with precise forecasting, treating creative assets as predictable drivers of revenue rather than expensive gambles.

The same architectural pattern, semantic translation, error-driven prediction, and autonomous self-optimization, is not limited to social media. Any domain where success depends on understanding the interplay of qualitative and quantitative signals, from political messaging to product design to entertainment, stands to benefit from systems that can read, reason, reflect, and improve on their own. The question is no longer whether AI can predict what resonates with people. It is whether we are ready to let it teach itself how.