Meet Your New Data Guardian

1. The High Cost of “Dirty” Data

In the modern enterprise, data is the raw ingredient behind every strategic decision. Think of it like a premier restaurant: the Data Engineer is the sous-chef, meticulously sourcing and preparing ingredients, while the Data Scientist is the executive chef, transforming them into the predictive models and insights that drive the business forward. If the ingredients are spoiled or mislabelled, the final dish fails, no matter how talented the chef.

Across several of our AI initiatives at WPP, we uncovered a pattern that was quietly draining velocity from our most ambitious projects. Our “sous-chefs”, skilled data engineers responsible for pipeline integrity, were spending up to one full day per week on tedious, largely manual Quality Assurance (QA) of data flowing into BigQuery. Row by row, column by column, they checked for missing values, logical contradictions, and phantom duplicates, work that was essential but deeply repetitive.

This wasn’t just an inconvenience. It was a strategic bottleneck: it slowed the delivery of every downstream AI application, consumed senior engineering talent on janitorial tasks, and most dangerously created risk. When a human eye is the only safeguard between raw data and a production model, errors don’t just slip through occasionally. They slip through systematically, at exactly the moments when the data is most complex and the engineer is most fatigued.

We asked ourselves a different question: What if, instead of building another dashboard or writing another validation script, we built an intelligent agent, one that could reason about data quality the way an experienced engineer does, learn from every audit it performs, and get better over time?

This article describes how we built that agent, what makes it fundamentally different from traditional automation, and what happened when we put it to the test.

2. The Problem: Why Data Quality Demands More Than Scripts

The Data & The Modeling Ecosystem

The agent operates on digital marketing campaign performance data hosted in BigQuery, massive tables that track how advertising campaigns perform on a daily basis across major ad networks like Meta (Facebook and Instagram). Each row represents a highly granular intersection of a specific campaign, audience segment, platform, device, and creative asset. This data captures everything from broad identifiers—like the parent brand and geographical targeting—down to precise performance metrics, including impressions, clicks, daily spend, conversions, leads, and app installs.

This foundational data is the lifeblood of two critical machine learning systems:

- The Prediction Model: A classification system designed to predict whether a planned campaign will yield a negative, neutral, or positive outcome.

- The Recommendation System: A highly flexible advisory engine capable of handling any combination of “missing modalities.” For example, if a media planner inputs a specific Brand, Target Audience, and Location, the system dynamically recommends the optimal missing parameters, such as the best platform to use and the most effective creative asset to deploy.

For more background on the broader modeling context, see From Guesswork to Glimpse: How AI is Predicting the Future of Marketing Campaigns.

The Silent Threat of Data Corruption

Because these models directly inform real-world media spend and strategic campaign planning, their accuracy is paramount. The underlying data is regularly refreshed directly from the advertising platforms to keep the models up to date. However, this automated refresh process frequently introduces subtle corruption and systemic inconsistencies.

For instance, while metrics like engagement and clicks generally remain stable, downstream pipeline issues frequently render conversions and awareness metrics unreliable (“not high quality”). At the individual row level, these anomalies are often entirely invisible. But at scale, they are devastating. If left unchecked, these untrustworthy data points bleed into the training sets, silently degrading the prediction model’s accuracy and causing the recommendation engine to suggest sub-optimal, expensive campaign configurations. This makes rigorous, automated data quality validation not just a nice-to-have, but an absolute necessity for the ecosystem to function.

The Failure Modes

The scale and velocity of data flowing into BigQuery mean that errors don’t announce themselves. They hide. Through our manual QA process, we catalogued six recurring failure modes, each one capable of silently degrading every model built on top of the data:

| Failure Mode | What Happens | Why It Matters |

|---|---|---|

| Missing Values | Fields arrive empty — sometimes 5% of a column, sometimes 40% | Models trained on incomplete data learn incomplete patterns. Forecasts drift silently. |

| Outliers | A metric reads 200,000 clicks when the true value is 500 | A single extreme value can skew an entire model’s calibration, distorting spend recommendations. |

| Duplicate Rows | Identical records appear multiple times | Inflated counts cascade into inflated budgets. Campaigns appear to outperform reality. |

| Categorical Corruption | A brand name like "Nike" is replaced with "zX9pQ" | Segmentation breaks. Reports attribute performance to entities that don’t exist. |

| Logical Inconsistencies | More clicks than impressions. Spend recorded against zero impressions. | These are the most insidious — each value looks valid in isolation, but the relationships between them violate business reality. |

| Missing Columns | An entire field disappears from a refresh | Downstream pipelines fail or, worse, silently fall back to defaults. |

A static validation script can catch some of these — the easy ones, the ones you’ve already seen. But scripts are brittle: they encode yesterday’s assumptions and break on tomorrow’s edge case. They cannot reason about why a pattern looks wrong, weigh it against historical context, or decide whether a recurring anomaly is a genuine error or a known artifact of a data source.

That requires judgment. And judgment is what we built the agent to provide.

3. Our Approach: An Agent That Reasons, Remembers, and Improves

We designed the Data Quality Assurance Agent — not as a script, not as a dashboard, but as a reasoning entity capable of planning an audit strategy, querying data, forming hypotheses about its health, testing those hypotheses, and learning from the results. The distinction matters. A script checks what you tell it to check. An agent decides what to check, based on what it knows and it has the tools to act on that decision end-to-end.

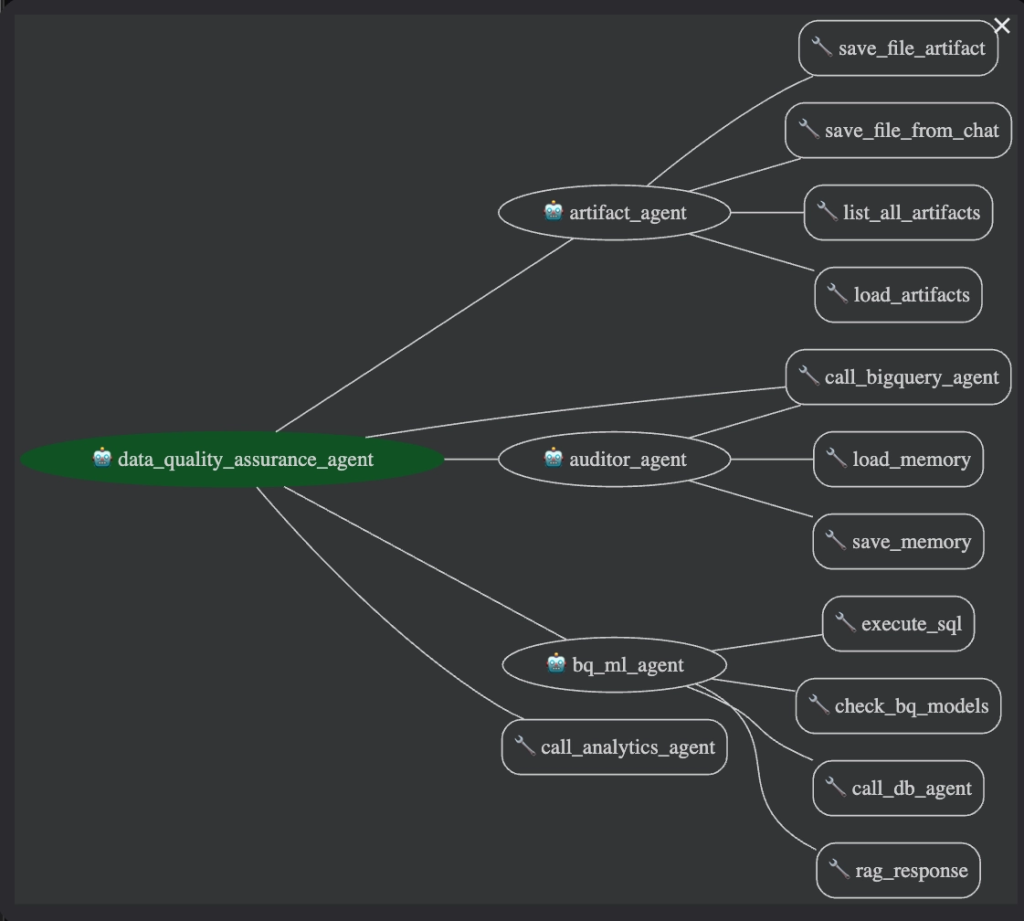

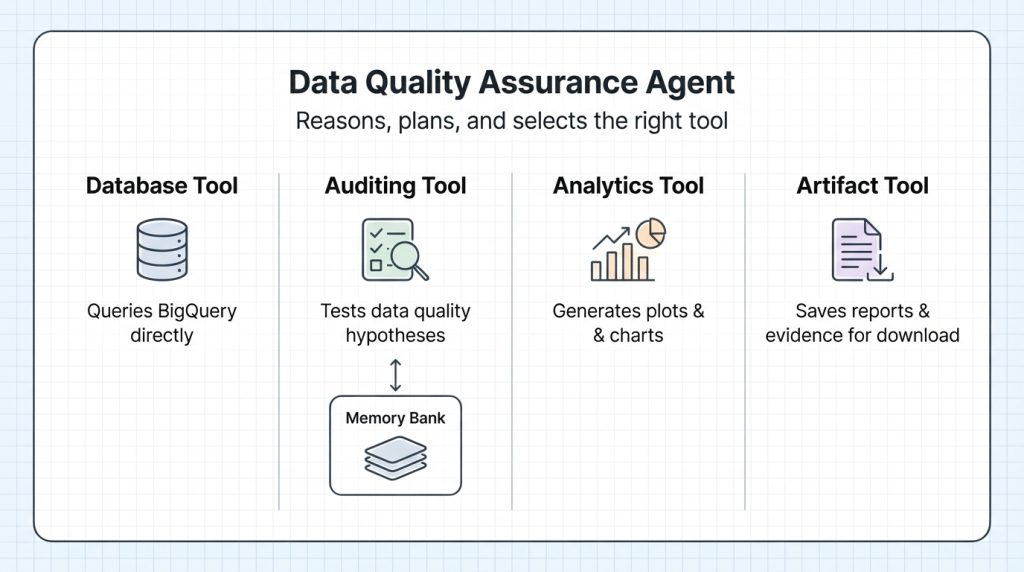

Architecture: One Agent, Specialized Tools

The agent is powered by a single reasoning core that plans, decides, and acts. What gives it breadth is its toolkit, a set of specialized capabilities it can invoke as needed, selecting the right tool for each step of the audit:

- Database Tool: enables the agent to query BigQuery directly, fetching schemas, row counts, column statistics, and raw data samples.

- Auditing Tool: the agent’s analytical engine. It formulates hypotheses about potential quality issues, runs targeted checks, and compiles structured findings. This tool reads from and writes to the Memory Bank.

- Analytics Tool: generates visualizations using Python — charts, distributions, and plots that make audit findings immediately legible to stakeholders.

- Artifact Tool: packages the final audit report, charts, and evidence into downloadable artifacts stored in Google Cloud.

The agent orchestrates these tools autonomously. When a user asks it to audit a table, the agent formulates a plan, queries the data, runs its checks, generates visualizations where useful, and compiles a structured report, all without the user needing to specify which tool to use or in what order.

The Key Innovation: Long-Term Memory

Most AI tools are stateless. When the session ends, everything the system learned disappears. The next audit starts from zero. This is the fundamental limitation we set out to break. The agent maintains a persistent Memory Bank, a long-term knowledge store that survives across sessions and accumulates institutional intelligence over time. This memory captures three categories of knowledge:

- Historical Explanations When a data engineer confirms that a recurring anomaly is caused by a known tracking limitation or data source quirk, the agent records that explanation. The next time it encounters the same pattern, it doesn’t waste time flagging it as a new issue, it references the known cause, notes it in the report, and moves on to genuinely novel problems.

- Business Context Over successive audits, the agent absorbs the specific rhythms and patterns of our marketing data, seasonal spikes, platform-specific reporting delays, expected variance ranges for different campaign types. This contextual awareness allows it to distinguish between a real anomaly and normal business variation.

- Evolutionary Learning With every audit, the agent’s knowledge base deepens. Instead of repeating the same blind checks, it refines its hypotheses based on what it has seen before — which columns tend to have issues, which tables are most prone to duplication, which logical inconsistencies recur. The agent doesn’t just run. It compounds.

This is what separates an agent from a script. A script executes the same logic every time, regardless of history. The agent carries forward everything it has learned and every audit it performs makes the next one sharper.

The Tech Stack

To ensure the agent was enterprise-grade, we built on the full Google Cloud AI ecosystem:

| Component | Role |

|---|---|

| Vertex AI Agent Engine | Manages the agent’s long-term specific memory persistence, and saving of the chat sessions |

| BigQuery | The single source of truth — the agent performs direct, in-place auditing against production tables |

| Agent Development Kit (ADK) | The framework used to define the agent’s tools, constraints, and interaction boundaries |

| Google Cloud Storage | Persistent storage for audit trails, PDF reports, and visual evidence |

| Cloud Runs | Used to deploy the A2A Agent API, and the ADK Web UI for demo purposes |

| A2A | The protocol to expose our Agent as a headless API |

4. Proving It Works: Synthetic Error Injection

We didn’t hope the agent worked. We proved it using a controlled methodology we call Synthetic Error Injection. The premise is straightforward: take a perfectly clean dataset, intentionally corrupt it in specific, measurable ways, and then challenge the agent to find every error we planted. If the agent can detect artificially injected errors, whose exact type, location, and severity we control, we can be confident it will handle real-world data corruption, which is typically far less extreme.

Step 1: Preparing the Test Data

Before injecting errors, we prepare the data for safe, controlled experimentation:

- Anonymization — Real brand and advertiser names are replaced with generic identifiers (

"Brand 1","Company A"). Sensitive business information never enters the test environment. - Corruption— The dataset then receives a different severity level of corruption. This allows us to map the agent’s detection accuracy as a function of error density, from subtle (5%) to extreme (40%).

Step 2: Injecting Controlled Errors

Using purpose-built scripts, we introduce precisely calibrated corruptions into a table, 4 types of Structural and 7 types of Logical errors:

| Category | Error | Description |

|---|---|---|

| Structural | Missing Values (Nulls) | X% of cells set to NULL |

| Structural | Duplicate Rows | X% exact row copies |

| Structural | Dropped Columns | X% of columns removed |

| Structural | Categorical Errors | Random alphanumeric strings in category fields |

| Logical | Clicks > Impressions | Can’t click what wasn’t shown |

| Logical | Conversions > Clicks | Can’t convert without clicking |

| Logical | Spend with 0 Impressions | Paying for zero ad delivery |

| Logical | Video Completions > Plays | Can’t finish without starting |

| Logical | Purchases without Add-to-Cart | Funnel step skipped |

| Logical | Landing Page Views > Clicks | More landings than clicks |

| Logical | Negative Metric Values | Performance metrics can’t be negative |

Step 3: Synthetic Ground Truth Dataset

We keep track of the errors we introduce in a table and produce a ground truth dataset that looks like:

| Table_name | number_of_injected_logical_errors | type_of_logical_error | number_of_injected_structural_errors | type_of_structural_error |

|---|---|---|---|---|

| table_01 | 0 | – | 1 | categorical errors |

| table_02 | 0 | – | 1 | dropped columns |

| table_03 | 1 | clicks_exceed_impressions | 0 | – |

| table_04 | 1 | spend_with_zero_impressions | 0 | – |

5. Evaluation Pipeline, Experiments and Results

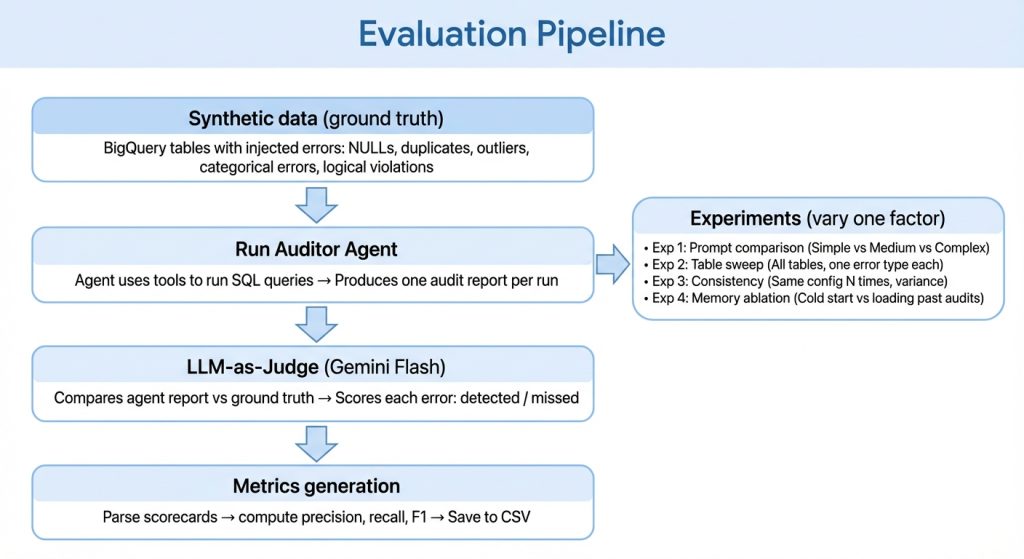

To evaluate our Agent we follow the pipeline below:

The 4 Experiments and Results

Each experiment isolates a single variable to understand what affects the auditor agent’s detection quality.

Experiment 1: Prompt Comparison

Question: Does giving the agent a more detailed prompt improve error detection?

Runs the agent 3 times on the same table, each time with a different user query style:

| Prompt Level | What the user asks | Agent’s system instruction |

|---|---|---|

| Simple | “Check if there are any errors for table X” | Stays constant (forensic mode) |

| Medium | “Perform a structured assessment checking physical integrity, numerical sanity, categorical validity” | Stays constant |

| Complex | “Conduct a forensic audit checking for 11 specific error types with detailed cross-column logical checks” | Stays constant |

Key insight from results: Only the complex prompt successfully detected the injected

spend_with_zero_impressionserror (139 rows, 1.82%), while both the simple and medium prompts missed it entirely — confirming that more detailed, forensic-style instructions are critical for the agent to test nuanced logical relationships rather than just surface-level checks.

Experiment 2: Table Sweep

Question: How well does the agent detect different types of errors?

Experiment 2 stress-tests the Auditor agent (using the complex prompt) on 11 synthetic BigQuery tables with progressively stacked error combinations, ranging from a single logical violation to the full set of 7 logical plus 4 structural error types (11 total). The agent achieved perfect detection on 8 of 11 tables (72.7% with F1 = 1.0) and delivered strong overall performance (precision 0.905, recall 0.864, F1 0.883).

Experiment 3: Consistency Check

Question: If we run the exact same audit N times, do we get the same results?

Runs the identical configuration multiple times (default: 3).

The Consistency Experiment demonstrated that the auditor agent achieves perfect 100% reproducibility, consistently detecting both injected errors and real data quality issues with the exact same metrics and explanations across all three runs. This deterministic performance confirms that the agent’s complex prompt configuration is highly reliable effectively eliminating the need for redundant audits.

Experiment 4: Memory Ablation

Question: Does loading past audit memories help the agent perform better?

Runs the agent twice on the same table with the complex prompt:

| Condition | What happens |

|---|---|

| No Memory | Cold start — agent has no context from prior audits |

| With Memory | Agent loads past findings from Vertex AI memory before auditing |

Key insight from results: Memory doesn’t unlock new capabilities when tables contain simple structural errors or when the user prompt leads to a very detailed auditing but acts as a critical consistency enforcer, stabilizing the audit scope to guarantee a 100% success rate. However, in cases that there are many different logical errors in a table and the prompt is simple, when past memories are loaded into the agent’s present context performance becomes optimal.

6. The Agent in Action

Below is an actual interaction with the deployed agent, demonstrating its reasoning in practice. The agent discovers available tables, loads its accumulated memory from prior audits, and then, guided by that memory, conducts a targeted audit.

Discovery

Human: “What tables do you have access to?”

Agent: “I have access to the following tables in the dataset:

campaign_performance_table_1campaign_performance_table_2campaign_performance_table_3campaign_performance_table_4campaign_performance_table_5

Memory Recall

Human: “Load your memories.”

Agent: “I have loaded the following memories:

- A general instruction to always check for null values in tables.

- A specific data quality issue in

campaign_performance_table_2where 188 rows (18.8%) were duplicates based onevent_date,campaign_id, andad_creative_id.- A preference for reports to be formatted in Markdown and include an executive summary.”

The agent doesn’t start from scratch. It arrives with institutional knowledge — prior findings, user preferences, and standing instructions — all retrieved from its persistent Memory Bank.

Hypothesis-Driven Audit

Human: “Based on these memories, audit

campaign_performance_table_3.”

The agent formulates a plan, queries the table using its database tool, and — informed by its memories — produces a structured audit report:

Executive Summary: This audit revealed a consistent pattern of missing values across key columns, affecting exactly 5% of total rows. Specifically,

event_date,campaign_id,impressions,clicks, andconversionseach had 50 null entries out of 1,000 rows. No exact duplicate rows were found. This suggests a systemic issue with data completeness rather than random omissions.

The agent then provides detailed findings per column — quantifying impact, assessing severity, and recommending next steps — before offering to proceed with outlier detection, categorical validation, and logical consistency checks.

Persistent Learning

Human: “Save the results for this table.”

Agent: “The audit results have been saved to memory.”

The next time the agent audits any table, it will carry forward what it learned here — the 5% missing-value pattern, the columns affected, the systemic nature of the issue. Every audit makes the next one faster, more targeted, and more informed.

7. Impact and What Comes Next

Operational Impact

The deployment of the Data Quality Assurance Agent has delivered measurable results:

- Reclaimed engineering capacity. By automating the bulk of manual QA, data engineers have shifted from repetitive inspection work to higher-value activities.

- Earlier error detection. Quality issues are now caught at the point of ingestion, before they propagate into downstream models. This “shift-left” approach reduces the blast radius of bad data from hours to minutes.

- Higher model reliability. Marketing agents, analytics pipelines, and machine learning models now operate on data that has been systematically validated, reducing the risk of predictions and recommendations built on flawed foundations.

The Bigger Picture

This agent is more than a tool. It is a blueprint for autonomous data governance, a pattern that can be replicated across any data pipeline where quality, scale, and velocity collide.

We are currently extending the agent along three axes:

- Cross-table auditing: enabling the agent to detect inconsistencies across related datasets, not just within a single table. Many of the most damaging data quality issues manifest as contradictions between tables that individually look clean.

- Event-driven execution: triggering the agent automatically whenever a BigQuery table is updated, transforming data quality monitoring from a scheduled chore into a continuous, always-on safeguard.

- Adversarial stress-testing: today, our synthetic error injection is script-based and manually configured. We are building a dedicated adversarial agent whose sole purpose is to generate increasingly complex, realistic data corruptions, subtle logical contradictions, plausible-looking outliers, correlated missing-value patterns, specifically designed to challenge the QA agent’s detection capabilities. By putting one agent against the other in a continuous red-team / blue-team loop, both improve: the adversarial agent learns to craft harder-to-detect errors, and the QA agent learns to catch them, driving each other toward sharper, more robust performance over time.

Together, these extensions move us toward a future where data quality monitoring is not a task that consumes an engineer’s day. It is a capability the agent handles continuously and intelligently, surfacing only the issues that require human judgment and decision-making.